Momentum builds on federal oversight of facial recognition tech after reported abuses

Lawmakers in the House and Senate are considering legislation that would halt the use of facial recognition and biometric data collection tools by federal law enforcement, signaling that the controversial technologies may soon face oversight after years of debate and revelations about their role in discriminatory policing.

The Facial Recognition and Biometric Technology Moratorium Act, reintroduced in June by Sen. Ed Markey (D-Mass.) and Rep. Pramila Jayapal (D-Wash.), would fully ban federal agencies from using facial recognition and biometric technology unless Congress lifts the ban. It would also block funding to state and local law enforcement agencies that do not stop using the technology. The bill would still allow cities and states to keep their existing laws and pass new ones.

More than 40 privacy and civil liberties groups have thrown their influence on Capitol Hill and their organizing power behind the Facial Recognition and Biometric Technology Moratorium Act, saying that cases in which law enforcement’s use of facial recognition has violated Americans’ privacy and legal rights have grown worse.

“What we do not know towers over what we do and it needs to change,” Rep. Sheila Jackson Lee (D-Texas), chairwoman of the House Judiciary Subcommittee on Crime, Terrorism, and Homeland Security, said at a hearing on the subject Tuesday.

“Seemingly little thought has been put in the widespread adoption of technology that can materially alter community interaction with law enforcement. This is the issue this committee hopes to correct today and going forward.”

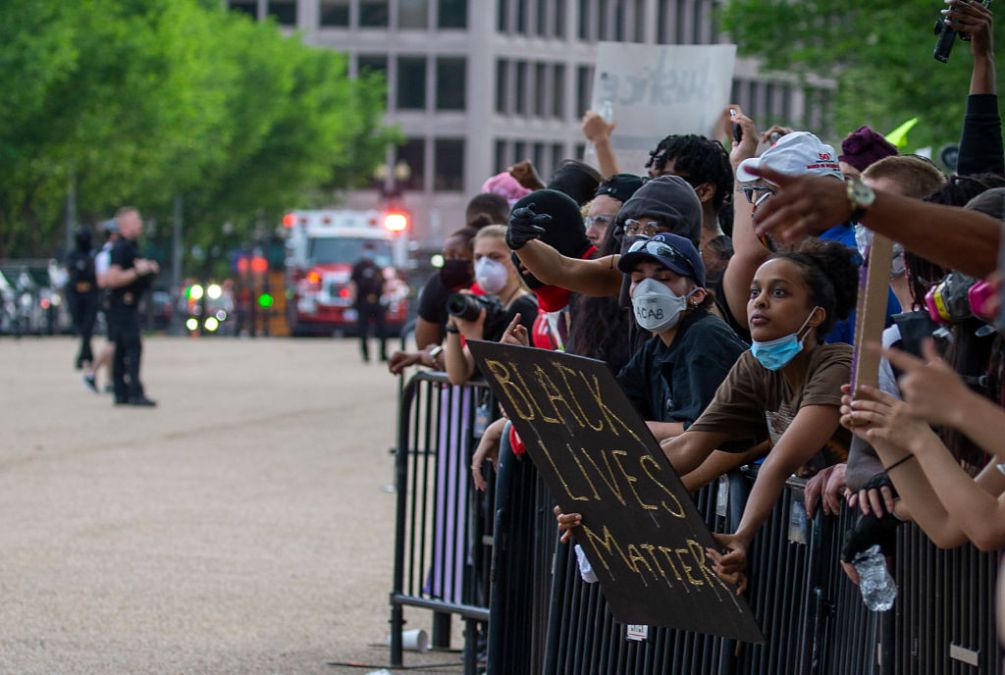

The issue has gained momentum in light of high-profile uses of the technology, whose deployment has traditionally been obscured by a lack of oversight. Federal law enforcement used the technology on protesters after the police murder of George Floyd, according to a Government Accountability Office report released in June. The FBI also used the technology to find rioters who stormed the Capitol building on January 6, according to the same report. There are also at least three known instances of Black men being wrongfully arrested because of false matches by the technology.

“Law enforcement agencies and tech companies have weaponized facial recognition technology for too long, repeatedly putting Black people’s safety and our civil rights in harm’s way,” Jade Magnus Ogunnaike, senior campaigns director at Color Of Change, a racial justice organization, told CyberScoop. “The work to ban facial recognition technology is an urgent racial justice issue, and we call on members of Congress to support this legislation and bring the bill to the floor for a full vote.”

While the latest instances of abuse are new, warnings from advocates are not. Nor are discussions in Congress, which hosted a series of House Oversight hearings on the technology between 2019 and 2020. The renewed round of hearings has already sparked concerns that legislation reining in the technology will again stall in committee, as it did last summer.

“We don’t have time to debate about ‘regulatory standards’ that will ultimately fail to reduce the harm of this fundamentally discriminatory technology,” Evan Greer, director of Fight for the Future, wrote in a statement. “Lawmakers need to do their jobs right now and pass the ‘Biometric Technology Moratorium Act.’”

The ramifications of misuse aren’t theoretical

Robert Williams testified in front of subcommittee members on Tuesday about his wrongful arrest by the Detroit police in 2020 after an incorrect facial recognition match.

Williams, who is now suing the department, spent 30 hours in custody and had to waive his right to counsel before seeing the picture used to match him in connection with an investigation into the theft of watches from a Detroit store.

Multiple studies have shown that, even in ideal lab conditions, facial recognition technology disproportionately produces false positives when used on people of color. A 2019 federal study by the National Institute of Standards and Technology found that some facial recognition algorithms produced false positives for Asian and Black people at rates up to 100 times higher than for white people.

Some commercial facial analysis algorithms misclassified darker-skinned women up to 35% of the time, compared with less than 1% of the time for lighter-skinned men, according to findings from researchers at the Massachusetts Institute of Technology.

False positive rates are likely higher when police agencies rely on grainy images and unreliable databases, experts say.

Williams told lawmakers during his testimony Tuesday that he was the victim of just such a mistake.

“I held that piece of paper up to my face and said, ‘I hope you don’t think all Black people look alike,’” Williams said.

He recalled that the officer told him, “I guess the computer got it wrong.”

Charges against Williams were dropped two weeks later. The department’s chief called the arrest the result of “shoddy police work” but has declined to comment on the lawsuit.

Williams isn’t alone. At least two other Black men have been wrongfully arrested due to an incorrect facial recognition match.

There is no reliable count of how many of the roughly 18,000 law enforcement agencies in the U.S. deploy facial recognition technology, which typically uses algorithms to return a list of possible matches. At least 1,800 agencies have employees who have used or tested facial recognition software from Clearview AI, a company that built its database by scraping social media without users’ permission, according to BuzzFeed News. A Government Accountability Office report issued last month warned that federal agencies are failing to track which facial recognition systems their employees use, potentially exposing the public to risk.

Meanwhile, law enforcement facial recognition networks include photos of roughly one in two American adults, Georgetown Law’s Center on Privacy and Technology has found.

“I think the issues we’ve discussed today are not just accuracy with the technology,” said Rep. Andy Biggs (R-Ariz.), the top Republican on the subcommittee. “I think we’re talking about a total invasion of privacy that is absolutely unacceptable and at some point is going to give too much power to the state and we have seen it already in [Mr. Williams’ case] and others.”

Not everyone is interested in a full ban

Opponents of a ban say that, with proper regulation and standards, the technology can be a powerful tool in cases such as finding missing children or tracking down suspected terrorists.

“It is nearly impossible to ban technology, but we can regulate it,” Rep. Ted Lieu (D-Calif.) said at the Tuesday hearing.

Lieu intends to circulate legislation that he says would require a warrant to use facial recognition tools in most cases, prohibit use of the technology on activity protected by the First Amendment, and set auditing and bias-testing standards. Lieu’s office didn’t respond to a request for details on the bill.

A warrant requirement could be useful in “some circumstances,” such as requiring police to obtain a warrant before running a facial recognition search at all, testified Barry Friedman, a law professor and director of the Policing Project at New York University. Even then, Friedman and other witnesses argued that facial recognition should not be the primary means of determining guilt.

Still, limiting the use of the technology or requiring better accuracy ignores the underlying problem of over-policing, Bertram Lee, counsel for tech policy at the Leadership Conference on Civil and Human Rights, argued to Congress on Tuesday.

“These technologies are already being used in communities that are being over-policed or over-surveilled,” he said.

Action isn’t limited to Capitol Hill

As Congress dithers, proponents of facial recognition bans have made significant strides at the state and local levels. Massachusetts, Utah, Washington, Vermont, Virginia and, most recently, Maine, as well as 19 cities and counties, have passed legislation limiting its use to some extent. Advocates have also pressed the White House to enact a moratorium.

Some advocates have taken aim at the private companies behind the technology. In May, Amazon extended the moratorium it imposed in 2020, amid public backlash, on selling its facial recognition technology to police. On Wednesday, more than 35 organizations signed onto a campaign led by Fight for the Future calling on retailers not to use facial recognition on customers or workers in their stores.